Analyzing the Threat to Speech Privacy from Smartphone Motion Sensors

In this research, we analyze the effect of speech on smartphone motion sensors, in particular the gyroscope and the accelerometer. We test the motion sensors in several common scenarios involving a smartphone and a speech source, either a speech generator (loudspeaker) or a human speaker.

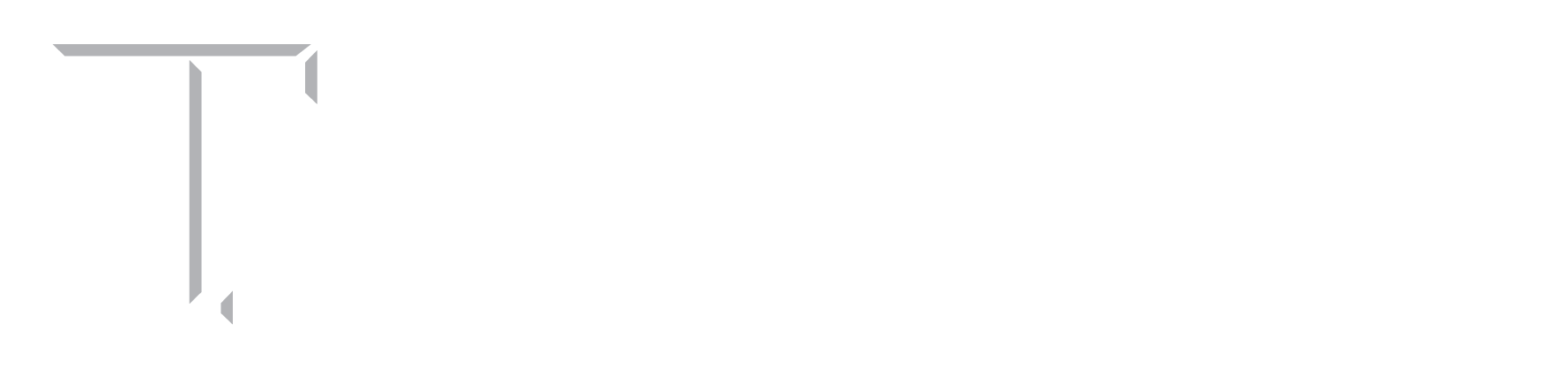

Figure 1: Experiment setup depicting (a) loudspeaker and smartphone sharing the same surface, (b) loudspeaker and smartphone placed on different surfaces but close to each other, and (c) human speaking near the smartphone placed on a surface.

We observe that smartphone motion sensors may indeed be susceptible to speech signals when the smartphone and the loudspeaker (with subwoofers) generating the speech signal share a common surface. This effect may also allow for speaker and gender identification, as demonstrated in existing work. However, we propose that the recorded effect on the motion sensors is possibly due to conductive vibrations that travel across the shared surface, rather than acoustic vibrations travelling through the air.

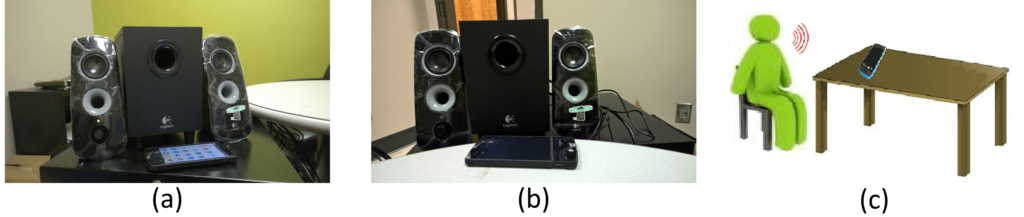

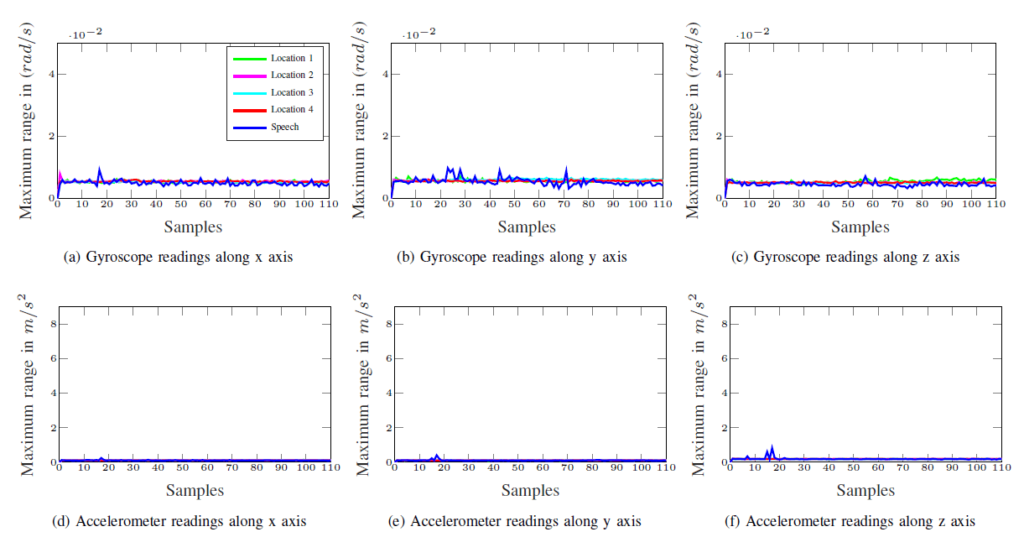

Figure 2: Comparison of sensor behavior at ambient (quiet) locations and in the presence of speech in the scenario depicted in Fig. 1(b). The maximum variance in sensor readings in the absence of speech at quiet locations 1, 2, 3, and 4 is plotted alongside the maximum variance in sensor readings in the presence of speech to determine the effect of speech on the sensors. Due to surface vibrations from the loudspeaker, there is a noticeable effect on the accelerometer readings that pushes the blue line plot significantly higher than the line plots of the quiet locations (denoted by the green, magenta, cyan, and red line plots).
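The comparison described in the caption above can be illustrated with a small sketch. It is not the authors' analysis code: the windowing scheme, the `window` parameter, and the function names `max_windowed_variance` and `exceeds_ambient` are assumptions made for illustration. The sketch computes the maximum variance per sensor axis over fixed-length windows and checks whether a recording made in the presence of speech exceeds every quiet-location baseline.

```python
from statistics import pvariance


def max_windowed_variance(samples, window):
    """Return, per axis, the largest variance seen in any window.

    samples: list of [x, y, z] sensor readings (hypothetical layout).
    window:  samples per non-overlapping window (an assumed parameter).
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    n_windows = len(samples) // window
    if n_windows == 0:
        raise ValueError("recording is shorter than one window")
    n_axes = len(samples[0])
    best = [0.0] * n_axes
    for w in range(n_windows):
        # Trailing samples that do not fill a whole window are ignored.
        chunk = samples[w * window:(w + 1) * window]
        for axis in range(n_axes):
            var = pvariance([row[axis] for row in chunk])
            best[axis] = max(best[axis], var)
    return best


def exceeds_ambient(speech, ambient_recordings, window):
    """Per axis, report whether the speech recording's maximum variance
    is above the highest maximum variance of all quiet recordings."""
    speech_var = max_windowed_variance(speech, window)
    baselines = [max_windowed_variance(rec, window) for rec in ambient_recordings]
    # Highest quiet-location variance observed on each axis.
    ceiling = [max(axis_vals) for axis_vals in zip(*baselines)]
    return [s > c for s, c in zip(speech_var, ceiling)]
```

In the figures the comparison is made visually from line plots. The strict greater-than test used here is only one possible decision rule, and a real analysis would likely need a margin to account for sensor noise.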

We study the sensitivity of smartphone motion sensors in multiple scenarios, ranging from testing the effect of laptop and phone speakers to testing live human speech spoken directly over the smartphone. The scenario designs were inspired by real-life situations where speech privacy could potentially be compromised using side-channel attacks via motion sensors. Our experiments show that average laptop speakers and the speakers found on smartphones lack sufficient loudness to transmit conductive vibrations across the shared surface to the smartphone motion sensors.

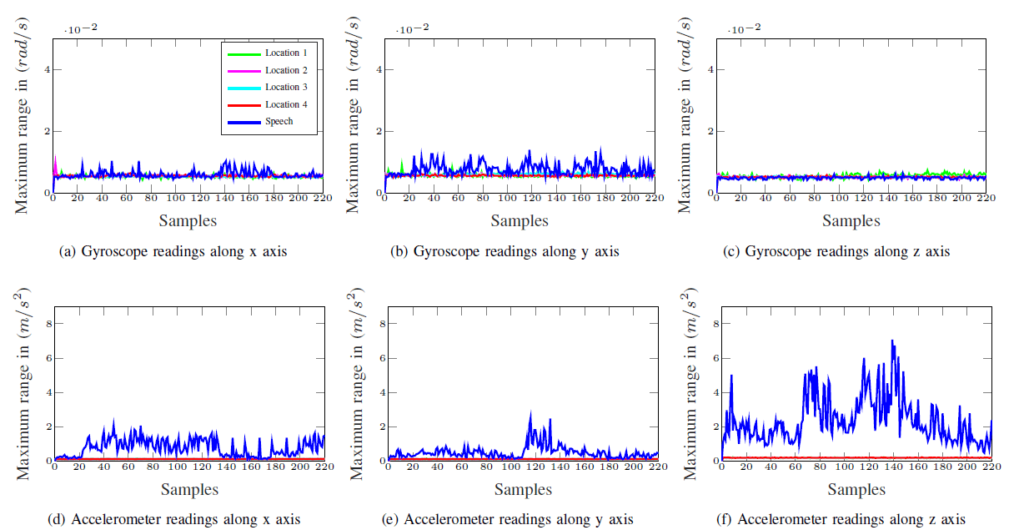

Figure 3: Comparison of sensor behavior at ambient (quiet) locations and in the presence of speech in the scenario depicted in Fig. 1(a). The maximum variance in sensor readings in the absence of speech at quiet locations 1, 2, 3, and 4 is plotted alongside the maximum variance in sensor readings in the presence of speech to determine the effect of speech on the sensors. Due to surface vibrations from the loudspeaker, there is a noticeable effect on the accelerometer readings that pushes the blue line plot significantly higher than the line plots of the quiet locations (denoted by the green, magenta, cyan, and red line plots).

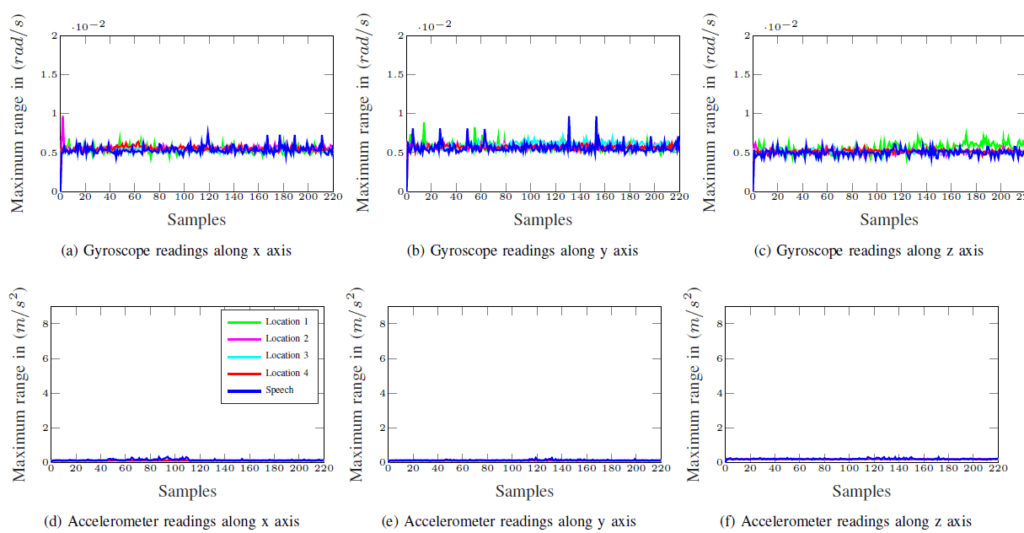

In the case of live human speech, we consider two scenarios, normal human speech and loud human speech, each spoken near the smartphone in an attempt to influence the smartphone motion sensors. We show that neither speech type is strong enough to affect the embedded motion sensors through acoustic vibrations. Thus, the results suggest that the threat to speech privacy from smartphone motion sensors may be applicable only in limited scenarios.

Figure 4: Comparison of sensor behavior at ambient (quiet) locations and in the presence of speech in the scenario depicted in Fig. 1(c). The maximum variance in sensor readings in the absence of speech at quiet locations 1, 2, 3, and 4 is plotted alongside the maximum variance in sensor readings in the presence of speech to determine the effect of speech on the sensors. Due to surface vibrations from the loudspeaker, there is a noticeable effect on the accelerometer readings that pushes the blue line plot significantly higher than the line plots of the quiet locations (denoted by the green, magenta, cyan, and red line plots).

People

Faculty

Student

- S Abhishek Anand (PhD candidate)

Publication

- Speechless: Analyzing the Threat to Speech Privacy from Smartphone Motion Sensors.

Abhishek Anand and Nitesh Saxena

In IEEE Symposium on Security and Privacy (IEEE S&P, Oakland), May 2018.
